Superforecasting

What a physicist's party trick taught me about the most underrated skill in consulting.

A client asked me once how many people her organization would need to hire to double their canvassing program across three locations. She had a goal and a timeline but no staffing models or historical data.

I didn’t have the answer, either, but I had a method: break the question into smaller pieces. How many doors per day can one canvasser knock? How many contact attempts does it take to get a completed conversation? What’s the realistic knock rate in urban versus rural areas, and what’s the split across those three locations? How many working days are in the timeline? None of these sub-questions were hard to estimate. Combined, they gave us a staffing range that held up well enough to build a budget around.

I do some version of this on most engagements. A nonprofit wants to know whether a revenue target is realistic. A campaign director wants to know if there’s enough time to hit a persuasion goal before Election Day. An executive director wants to know how many staff a new program actually requires versus how many she wishes it required. I almost never have clean data for these questions. What I have is the ability to take the big unanswerable thing and decompose it into a set of smaller, answerable things.

It turns out this has a name. Enrico Fermi, the physicist, used to pose questions of this kind to his students, such as “How many piano tuners are there in Chicago?” No one actually knows the real answer to this question. It’s not tracked anywhere, and people have checked! But estimate the population, the percentage of households with a piano, how often a piano needs tuning, and how many tunings one tuner can do in a year, and suddenly you’re in the ballpark. The technique doesn’t produce a precise answer, but it does produce a range, and a range is almost always more useful than a pure guess.
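For readers who like to see the arithmetic, here is a minimal Python sketch of the piano-tuner estimate. Every number in it is a placeholder made up for illustration, not a sourced figure, and the helper name fermi_range is hypothetical. Each input is a (low, high) guess; the sketch multiplies the guesses that push the count up and divides by the ones that push it down.

```python
from math import prod


def fermi_range(multiply, divide):
    """Combine (low, high) guesses into a (low, high) estimate.

    The low end pairs the smallest numerators with the largest
    denominators; the high end does the reverse.
    """
    low = prod(lo for lo, _ in multiply) / prod(hi for _, hi in divide)
    high = prod(hi for _, hi in multiply) / prod(lo for lo, _ in divide)
    return low, high


# All figures below are illustrative assumptions, not data.
multiply = [
    (2_500_000, 3_000_000),  # people living in the city
    (0.02, 0.05),            # share of households that own a piano
    (0.5, 1.0),              # tunings per piano per year
]
divide = [
    (2.0, 3.0),    # people per household
    (500, 1000),   # tunings one tuner can complete in a year
]

low, high = fermi_range(multiply, divide)
print(f"Somewhere between {low:.0f} and {high:.0f} piano tuners")
```

Stacking every low guess together, and every high guess together, gives deliberately wide bounds. That is the point: a range wide enough to be honest is still narrow enough to rule out "five" and "five thousand," which is often all a budget conversation needs.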

I learned about Fermi problems from Philip Tetlock and Dan Gardner’s book Superforecasting, which I want to spend some time on here because it reframed how I think about almost every part of the consulting relationship.

The forecasters who weren’t supposed to be good at this

Tetlock ran a large-scale research project called the Good Judgment Project that tracked thousands of people making predictions about geopolitical events over several years. A small subset of forecasters were consistently more accurate than the rest. They outperformed not just average participants but intelligence analysts with access to classified information. Tetlock called them superforecasters.

What set them apart was a set of habits: comfort with probability rather than certainty, a discipline of breaking problems into estimable parts rather than reasoning from gut feeling, willingness to update beliefs in small increments as new information arrives, and the humility to treat their own confidence as something to be calibrated rather than trusted.

That last one is the one I think about often. Superforecasters are people who maintain a more accurate picture of what they don’t know. They hold their beliefs loosely enough to revise them, and tightly enough to act on them. That balance — conviction without rigidity — is the posture I’m trying to maintain every time I walk into a client’s organization and start forming a diagnosis.

The Good Judgment Project’s website has a solid overview of the research (not paywalled). The book is a better read than most popular science. Gardner is a skilled co-writer and the narrative moves. But the framework itself is what I want to pull on here, because once I started seeing consulting through the lens of estimation under uncertainty, a bunch of other things I’d been reading clicked into place.

Why organizations can’t do what they know they should

One of Tetlock’s findings is that knowing the right approach doesn’t mean people will use it. Superforecasters have to fight their own cognitive biases constantly: the pull of overconfidence, the comfort of early conclusions, and the temptation to stop updating once they’ve landed on a view.

This dynamic is the subject of a great article, Alec Lewis’s piece in The Athletic, “NFL teams know the best way to draft, so why aren’t they doing it?” (gift article so you can get through the NYT paywall). Researchers including Nobel laureate Richard Thaler demonstrated nearly twenty years ago that NFL teams systematically overvalue high draft picks and would be better off accumulating more picks by trading down. The probability that any given pick outperforms the next player chosen at the same position is barely better than a coin flip. This research is well known, but most teams keep doing the opposite.

Lewis interviews fourteen general managers, coaches, scouts, and analytics staffers, and what emerges is a portrait of organizational decision-making that will be painfully familiar to anyone who consults with nonprofits. The GM is focused on job security more than long-term roster building. The coach believes he can develop raw talent better than the data suggests. The scouts want to justify the months they spent evaluating a prospect. Ownership understands the logic intellectually but can’t resist the emotional pull of a big, exciting pick. And there’s a culture-wide reluctance to admit that outcomes are more random than anyone wants to believe, because admitting that means admitting that your expertise is less predictive than you thought.

I’ve been the consultant holding a well-supported recommendation and watching a client choose the opposite. For a long time I interpreted that as a failure of persuasion on my part, or stubbornness on theirs. This article helped me see it differently: as a system of competing pressures that makes the irrational choice feel rational to the people inside it. The Fermi-style decomposition can produce the right answer, but the right answer still has to survive the organization.

Where good analysis goes to die

Which brings me to a piece I think every consultant should read: W. Chan Kim and Renée Mauborgne’s “Fair Process: Managing in the Knowledge Economy,” originally published in Harvard Business Review in 1997.

Their central finding: people will commit to a decision they disagree with if they believe the process that produced it was fair. And they will sabotage a decision they agree with if they feel the process was unfair.

Kim and Mauborgne identify three principles at play in group processes: engagement (people affected by the decision had input), explanation (they understand why the decision was made), and expectation clarity (they know what’s expected of them going forward). The article includes a case study where identical changes were introduced at two manufacturing plants, one with fair process and one without. The plant that skipped it nearly fell apart, and the plant that practiced it transformed successfully.

This connects to the Superforecasting framework in a way I didn’t see at first. Tetlock’s superforecasters are good at getting to the right answer. But the right answer, deployed without fair process, often produces worse outcomes than a mediocre answer that everyone helped develop. I’ve delivered strategies that gathered dust, and when I look back at why, the explanation is almost always here: not in my analysis, but in how I presented it, who felt consulted, and whether the people responsible for implementation understood the reasoning behind the recommendation or just received the conclusion.

We wrote about the organizational mechanics of this in our Strategic Agility series. The management layer between strategy and execution is exactly where fair process lives or dies. (I think you can get the HBR article if you sign up for a free account. You get a few articles for free each month that way.)

The decisions that don’t deserve this much anguish

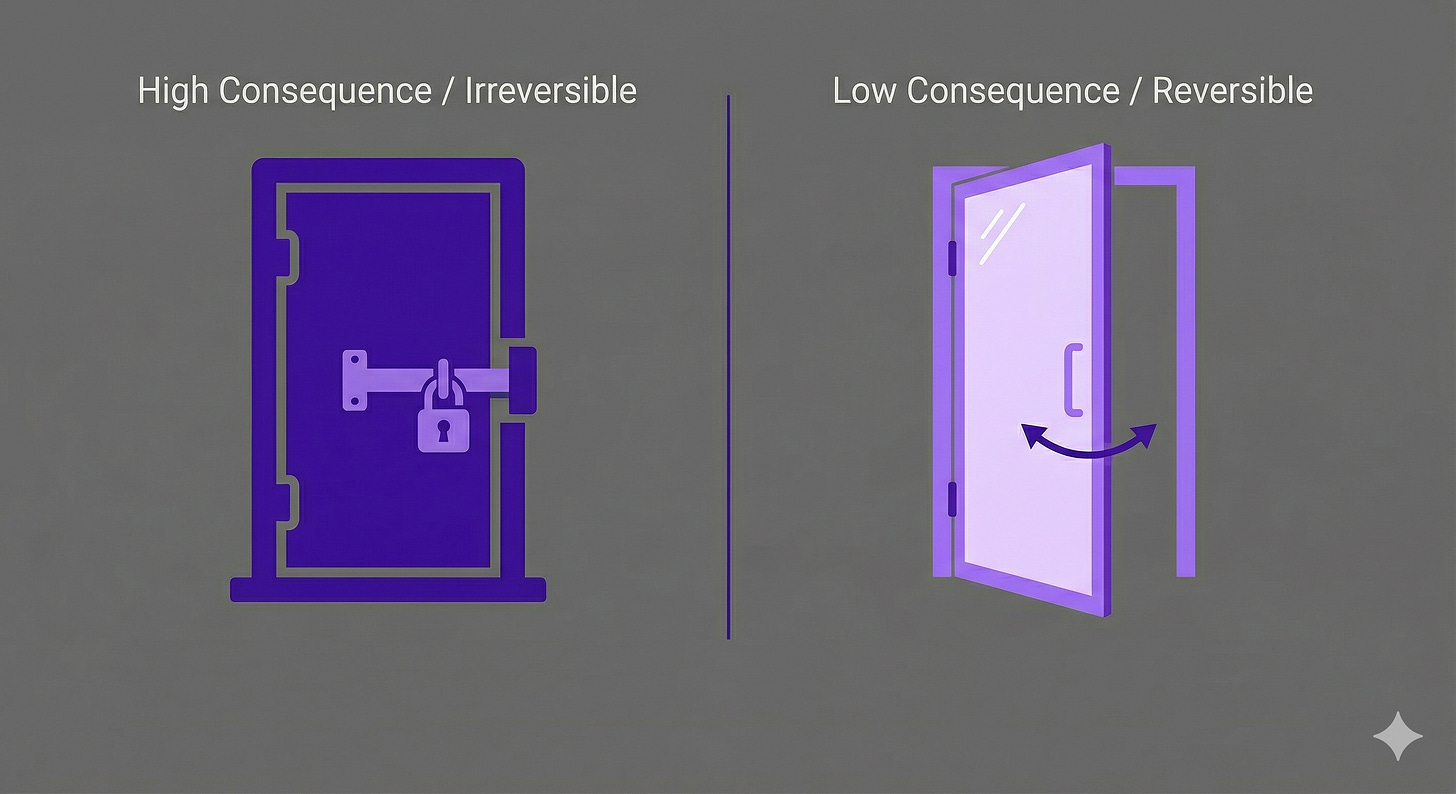

One more dimension of the uncertainty problem. Jeff Bezos, in a short section of Invent and Wander called “Disagree and Commit,” makes a distinction I now use with clients often: one-way doors versus two-way doors.

One-way doors are irreversible, high-consequence decisions. They deserve slow deliberation, multiple perspectives, and careful analysis. Two-way doors are reversible. If the decision turns out to be wrong, you can walk back through. Bezos’s observation is that as organizations grow, they start applying the slow, heavyweight one-way-door process to everything. The result is not better decisions but rather paralysis.

This maps directly onto the Fermi mindset. The Fermi approach says: I don’t need perfect data, I need a useful range. The Bezos framework says: I don’t need certainty about this decision, because if I’m wrong I can reverse it. Both are about calibrating the amount of deliberation to the actual stakes, and both require an honest assessment of how much you know and how much the decision actually costs if you’re wrong.

Most of the decisions I watch stall nonprofit organizations for weeks are two-way doors being treated like one-way doors. We built on this in our Strategic Agility piece on decision-making under uncertainty, where we turned the distinction into a practical tool called the Reversibility Test.

The room where it happens (badly)

There’s one more reading I want to mention, because it addresses the place where all of this thinking actually has to happen: the meeting.

Antony Jay’s “How To Run a Meeting,” published in Harvard Business Review in 1976 (I know), has aged better than almost anything I’ve read on the subject. Jay wrote a theory of what meetings are for — why human beings need them, what functions they perform that nothing else can replace, and how a chair should think about the role.

His most useful contribution is a taxonomy of what every agenda item is actually trying to accomplish: is it informative (share and discuss), constructive (generate something new), executive (assign responsibilities), or legislative (change the operating framework)? I’ve started mentally categorizing agenda items this way before meetings, and it clarifies why some meetings go nowhere: nobody agreed on what the conversation was supposed to produce.

This matters for the Fermi problem, too. The moment of estimation and the moment of diagnosis almost always happen in a room with other people. The quality of the estimate depends not just on the thinking but on how the room is structured: who’s in it, whether junior voices are heard before senior voices anchor the conversation, and whether the chair has sorted out what each discussion is supposed to produce. Jay gives a structure for that. Susannah and I touched on some of this in our piece on why most meetings don’t need to exist.

One more, for the bookshelf

Chris Zook and James Allen’s The Founder’s Mentality doesn’t connect to the Fermi framework as directly, but it’s worth including because it gives a diagnostic vocabulary for the organizational contexts where all of this plays out. Their argument: growth creates complexity, complexity creates internal dysfunction, and internal dysfunction kills the very thing that made the organization successful. They identify three predictable crises — overload, stall-out, and free fall — and most of the nonprofits I work with are somewhere in the first two without a name for what’s happening.

Having that name changes the engagement. Instead of treating symptoms, I can point at a pattern and say: this is overload, and here’s what usually comes next if we don’t address it. (The introduction and first chapter are enough to get the framework. The rest is case studies, useful but skippable if you’re short on time.)

The skill underneath all of it

What connects everything on this list is a single problem: how do you think clearly when you don’t have the information you want?

Fermi problems teach the method — decompose, estimate, combine. Superforecasting teaches the disposition — hold your beliefs loosely, update them often, calibrate your confidence. Fair process teaches the delivery — the best analysis in the world fails if the people who have to implement it don’t feel the process was legitimate. Bezos teaches the triage — not every decision deserves the same amount of anguish. Jay teaches the container — the meeting is where thinking either sharpens or falls apart. And Zook and Allen teach the context — the organizational dynamics that make clear thinking harder as organizations grow.

None of these were written for consultants. All of them describe what I actually do for a living more accurately than any consulting book I’ve read.

If you’ve come across something recently that changed how you think about the work — a book, an article, a paper from some other field entirely — reply to this email and tell me what it is. The best recommendations will show up in a future issue.